Responsible AI (RAI) refers to designing and deploying AI systems that are transparent, unbiased, and accountable, and that follow ethical guidelines. As AI systems become more robust and prevalent, it is essential that they are developed responsibly and in line with safety and ethical guidelines.

Healthcare, transportation, network management, and surveillance are safety-critical AI applications where system failure can have severe consequences. Large firms are aware that RAI is essential for mitigating technology risks. Yet according to an MIT Sloan/BCG report that surveyed 1,093 respondents, 54% of companies lacked Responsible AI expertise and talent.

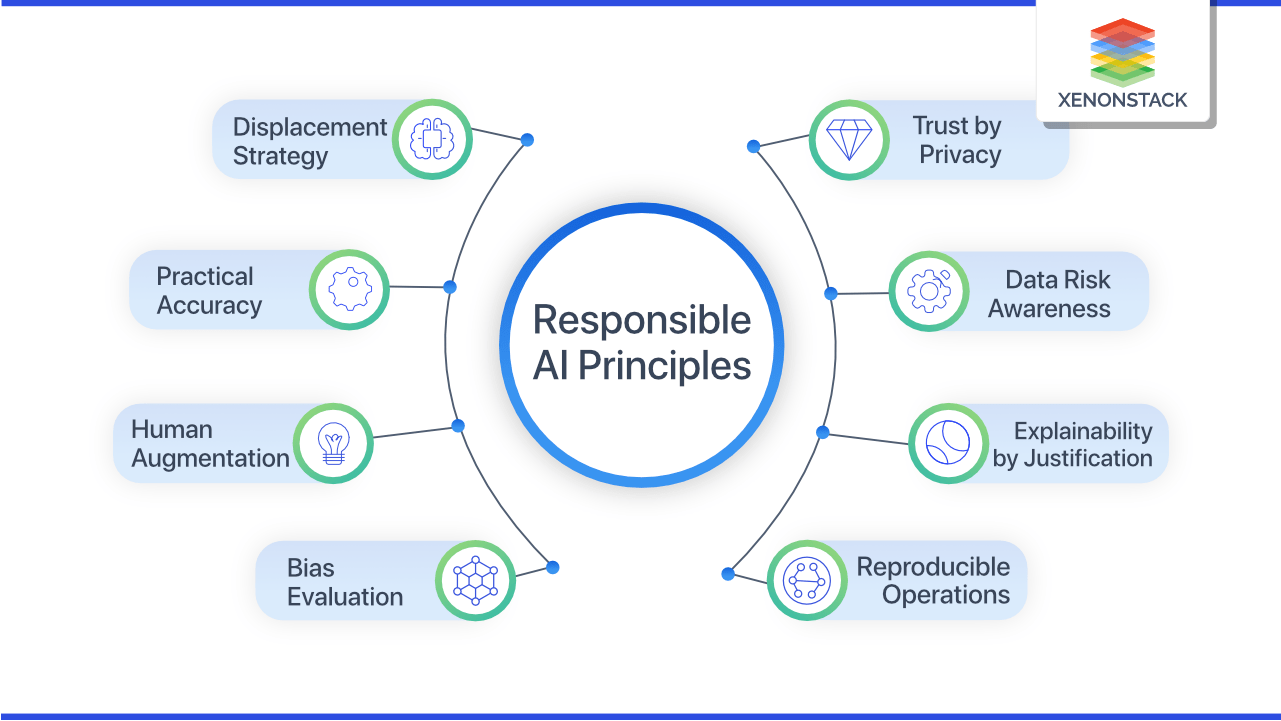

Although thought leaders and organizations have developed principles for responsible AI, ensuring the responsible development of AI systems still presents challenges. Let’s explore this idea in detail:

5 Principles for Responsible AI

1. Fairness

Technologists should design procedures so that AI systems treat all individuals and groups fairly and without bias. Fairness is therefore a primary requirement in high-risk decision-making applications.

Fairness is defined as:

“Examining the impact on various demographic groups and choosing one of several mathematical definitions of group fairness that will adequately satisfy the desired set of legal, cultural, and ethical requirements.”
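
To make the idea of "mathematical definitions of group fairness" concrete, here is a minimal sketch of two commonly used ones: demographic parity (equal rates of positive predictions across groups) and equal opportunity (equal true positive rates across groups). The function names, group labels, and toy data are assumptions made for illustration; they do not come from any particular library or from the definition quoted above.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Fraction of positive predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def true_positive_rates(y_true, y_pred, groups):
    """Fraction of actual positives predicted positive, within each group."""
    actual = defaultdict(int)
    caught = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            actual[group] += 1
            caught[group] += pred
    # Groups with no actual positives are skipped to avoid dividing by zero.
    return {g: caught[g] / actual[g] for g in actual}


def gap(rates):
    """Largest between-group difference; 0 means the groups are treated alike."""
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: two groups of four people each.
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]

demographic_parity_gap = gap(selection_rates(y_pred, groups))
equal_opportunity_gap = gap(true_positive_rates(y_true, y_pred, groups))

print("demographic parity gap:", demographic_parity_gap)
print("equal opportunity gap:", equal_opportunity_gap)
```

Which gap matters more depends on the legal, cultural, and ethical requirements of the application, which is why the definition above talks about choosing among several metrics.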

2. Accountability

Accountability means individuals and organizations developing and deploying AI systems should be responsible for their decisions and actions. The team deploying AI systems should ensure that their AI system is transparent, interpretable, auditable, and does not harm society.

Accountability includes seven components:

- Context (purpose for which accountability is required)

- Range (subject of accountability)

- Agent (who is accountable?)

- Forum (to whom the responsible party must report)

- Standards (criteria for accountability)

- Process (method of accountability)

- Implications (consequences of accountability)

3. Transparency

Transparency means that the reasoning behind decision-making in AI systems is clear and understandable. Transparent AI systems are explainable.

According to the Assessment List for Trustworthy Artificial Intelligence (ALTAI), transparency has three key elements:

- Traceability (the data, preprocessing steps, and model are accessible)

- Explainability (the reasoning behind decision-making/prediction is clear)

- Open Communication (regarding the limitations of the AI system)
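
As a rough illustration of how traceability and open communication might be recorded in practice, the sketch below stores a dataset fingerprint, the preprocessing steps, the model version, and the known limitations in one record that can be published alongside a model. The `TraceabilityRecord` class, its field names, and the sample values are hypothetical; ALTAI does not prescribe this format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class TraceabilityRecord:
    """Hypothetical record tying a model to its data and known limits."""
    model_name: str
    model_version: str
    dataset_sha256: str
    preprocessing_steps: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)


def fingerprint(data: bytes) -> str:
    """SHA-256 digest, so the exact training data can be identified later."""
    return hashlib.sha256(data).hexdigest()


# Toy stand-in for a training file; a real pipeline would read it from disk.
raw_data = b"age,income,approved\n34,52000,1\n51,38000,0\n"

record = TraceabilityRecord(
    model_name="loan-screening-demo",
    model_version="0.1.0",
    dataset_sha256=fingerprint(raw_data),
    preprocessing_steps=[
        "drop rows with missing income",
        "standardize age and income",
    ],
    known_limitations=["trained on a two-row toy sample; not representative"],
)

# Serialize the record so it can be stored or shared with auditors.
record_json = json.dumps(asdict(record), indent=2)
print(record_json)
```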

4. Privacy

Privacy is one of the main principles of responsible AI. It refers to the protection of personal information. This principle ensures that people’s personal information is collected and processed with consent and kept out of the hands of malicious actors.

A recent example is Clearview AI, a company that makes facial recognition models for law enforcement and universities. The UK’s data watchdog fined Clearview AI £7.5 million for collecting images of UK residents from social media without consent to create a database of 20 billion images.

5. Security

Security means ensuring that AI systems are protected against attack and do not pose a threat to society. One example of an AI security threat is an adversarial attack, in which maliciously crafted inputs trick ML models into making incorrect decisions. Protecting AI systems from cyber attacks is imperative for responsible AI.
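
To show what an adversarial attack can look like, here is a minimal sketch of one well-known technique, the fast gradient sign method (FGSM), applied to a tiny logistic regression model. The weights, input, and `epsilon` value are made up for illustration, and FGSM is only one of many attack methods; the article does not single it out.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(weights, bias, x):
    """Probability of the positive class under a logistic regression model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)


def sign(v):
    return (v > 0) - (v < 0)


def fgsm_perturb(weights, bias, x, y_true, epsilon):
    """One FGSM step: nudge every feature by epsilon in the direction
    that increases the model's loss on the true label."""
    p = predict_proba(weights, bias, x)
    # For logistic regression with cross-entropy loss,
    # the gradient of the loss with respect to x_i is (p - y) * w_i.
    grad = [(p - y_true) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model parameters and a correctly classified input (label 1).
weights = [2.0, -1.5, 0.5]
bias = -0.2
x = [0.6, 0.2, 0.4]
epsilon = 0.3

x_adv = fgsm_perturb(weights, bias, x, y_true=1, epsilon=epsilon)

print("original prediction:", predict_proba(weights, bias, x) > 0.5)
print("adversarial prediction:", predict_proba(weights, bias, x_adv) > 0.5)
```

In this toy setup, a change of at most 0.3 per feature is enough to push the model's output across the 0.5 decision threshold, which is the kind of failure that defenses such as adversarial training try to prevent.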

4 Major Challenges & Risks of Responsible AI

1. Bias

Human biases related to age, gender, nationality, and race can influence data collection, potentially leading to biased AI models. A US Department of Commerce study found that facial recognition AI misidentifies people of color more often than white people. Hence, using AI for facial recognition in law enforcement can lead to wrongful arrests. Building fair AI models is also challenging because there are 21 different definitions of fairness to choose from, and they cannot all be met at once: satisfying one fairness definition can mean sacrificing another.
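
The trade-off between fairness definitions can be seen in a small worked example. In the sketch below, two hypothetical groups have different rates of true positive outcomes. A perfectly accurate model then gives both groups the same true and false positive rates but different selection rates, and forcing equal selection rates introduces false positives in one group only. The group names, labels, and helper functions are assumptions made for illustration.

```python
def selection_rate(y_pred):
    return sum(y_pred) / len(y_pred)


def true_positive_rate(y_true, y_pred):
    hits = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(hits) / len(hits)


def false_positive_rate(y_true, y_pred):
    alarms = [p for t, p in zip(y_true, y_pred) if t == 0]
    return sum(alarms) / len(alarms)


def gaps(y_true, y_pred):
    """Absolute gap between groups A and B for three fairness metrics."""
    a_t, b_t = y_true["A"], y_true["B"]
    a_p, b_p = y_pred["A"], y_pred["B"]
    return {
        "selection_rate": abs(selection_rate(a_p) - selection_rate(b_p)),
        "true_positive_rate": abs(
            true_positive_rate(a_t, a_p) - true_positive_rate(b_t, b_p)
        ),
        "false_positive_rate": abs(
            false_positive_rate(a_t, a_p) - false_positive_rate(b_t, b_p)
        ),
    }


# Hypothetical ground truth: 3 of 5 positives in group A, 1 of 5 in group B.
y_true = {"A": [1, 1, 1, 0, 0], "B": [1, 0, 0, 0, 0]}

# Model 1: perfectly accurate, so predictions equal the labels.
perfect = {"A": [1, 1, 1, 0, 0], "B": [1, 0, 0, 0, 0]}

# Model 2: extra positives in group B to equalize selection rates.
parity_forced = {"A": [1, 1, 1, 0, 0], "B": [1, 1, 1, 0, 0]}

gaps_perfect = gaps(y_true, perfect)
gaps_forced = gaps(y_true, parity_forced)

print("perfect model:", gaps_perfect)
print("parity-forced model:", gaps_forced)
```

The perfect model has no error-rate gap but a selection-rate gap of about 0.4, while the parity-forced model closes the selection-rate gap at the cost of a false positive rate gap of 0.5.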

2. Interpretability

Interpretability is a critical challenge in developing responsible AI. It refers to understanding how the machine learning model has reached a particular conclusion.

Deep neural networks lack interpretability because they operate as black boxes with multiple layers of hidden neurons, making it difficult to understand the decision-making process. This is a challenge in high-stakes decision-making domains such as healthcare and finance.

Moreover, formalizing interpretability in ML models is challenging because it is subjective and domain-specific.
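
One model-agnostic way to get a partial view into a black-box model is permutation importance: shuffle one input feature at a time and measure how much the model's accuracy drops. The sketch below applies the idea to a stand-in model; the `black_box` function, the synthetic data, and the parameter values are all hypothetical, and this is only one of several interpretability techniques.

```python
import random


def black_box(row):
    """Stand-in for an opaque model: uses features 0 and 1, ignores feature 2."""
    return 1 if 2.0 * row[0] + 0.5 * row[1] > 1.0 else 0


def accuracy(model, X, y):
    return sum(model(row) == target for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Average drop in accuracy when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild the dataset with only column j replaced by shuffled values.
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


# Synthetic data: three uniform random features, labels taken from the model.
data_rng = random.Random(42)
X = [[data_rng.random() for _ in range(3)] for _ in range(200)]
y = [black_box(row) for row in X]

importances = permutation_importance(black_box, X, y)
for index, score in enumerate(importances):
    print(f"feature {index}: importance {score:.3f}")
```

Shuffling the ignored feature leaves accuracy unchanged, so its importance is zero, while the heavily weighted feature shows the largest drop. The result describes what the model relies on, not why, which is part of the reason interpretability remains hard to formalize.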

3. Governance

Governance refers to a set of rules, policies, and procedures that oversee the development and deployment of AI systems. Recently, there has been significant progress in AI governance discourse, with organizations presenting frameworks and ethical guidelines.

The EU’s Ethics Guidelines for Trustworthy AI, Australia’s AI Ethics Framework, and the OECD AI Principles are examples of AI governance frameworks.

However, the rapid advancement of AI in recent years can outpace these governance frameworks. Governance therefore needs a framework that assesses the fairness, interpretability, and ethics of AI systems.

4. Regulation

As AI systems become more prevalent, regulation is needed that takes ethical and societal values into account. Developing regulation that does not stifle AI innovation is a critical challenge in responsible AI.

Even with regulations such as the General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and the Personal Information Protection Law (PIPL) in place, AI researchers found that 97% of EU websites fail to comply with GDPR legal framework requirements.

Moreover, legislators face a significant challenge in reaching a consensus on the definition of AI that includes both classical AI systems and the latest AI applications.

3 Major Benefits of Responsible AI

1. Reduced Bias

Responsible AI reduces bias in decision-making processes, building trust in AI systems. For example, reducing bias in AI systems can support a fair and equitable healthcare system and fairer AI-based financial services.

2. Enhanced Transparency

Responsible AI produces transparent AI applications, which builds trust in AI systems. Transparent AI systems decrease the risk of error and misuse. Enhanced transparency makes auditing AI systems easier, wins stakeholders’ trust, and can lead to accountable AI systems.

3. Better Security

Secure AI applications ensure data privacy, produce trustworthy and harmless output, and are safe from cyber-attacks.

Tech giants like Microsoft and Google, which are at the forefront of developing AI systems, have developed Responsible AI principles. Responsible AI ensures that the innovation in AI isn’t harmful to individuals and society.

Thought leaders, researchers, organizations, and legal authorities should continuously revise responsible AI literature to ensure a safe future for AI innovation.

The post What is Responsible AI? Principles, Challenges, & Benefits appeared first on Unite.AI.