The OWASP Top 10 for LLM (Large Language Model) Applications version 1.0 is out, it focuses on the potential security risks when using LLMs.

OWASP released the OWASP Top 10 for LLM (Large Language Model) Applications project, which provides a list of the top 10 most critical vulnerabilities impacting LLM applications.

The project aims to educate developers, designers, architects, managers, and organizations about the security issues when deploying Large Language Models (LLMs).

The organization is committed to raising awareness of the vulnerabilities and providing recommendations for hardening LLM applications.

“The OWASP Top 10 for LLM Applications Working Group is dedicated to developing a Top 10 list of vulnerabilities specifically applicable to applications leveraging Large Language Models (LLMs).” reads the announcement of the Working Group. “This initiative aligns with the broader goals of the OWASP Foundation to foster a more secure cyberspace and is in line with the overarching intention behind all OWASP Top 10 lists.”

The organization states that the primary audience for its Top 10 is developers and security experts who design and implement LLM applications. However the project could be interest to other stakeholders in the LLM ecosystem, including scholars, legal professionals, compliance officers, and end users.

“The goal of this Working Group is to provide a foundation for developers to create applications that include LLMs, ensuring these can be used securely and safely by a wide range of entities, from individuals and companies to governments and other organizations.” continues the announcement.

The Top Ten is the result of the work of nearly 500 security specialists, AI researchers, developers, industry leaders, and academics. Over 130 of these experts actively contributed to this guide.

Clearly the project is a work in progress, LLM technology continues to evolve, and the research on security risk will need to keep pace.

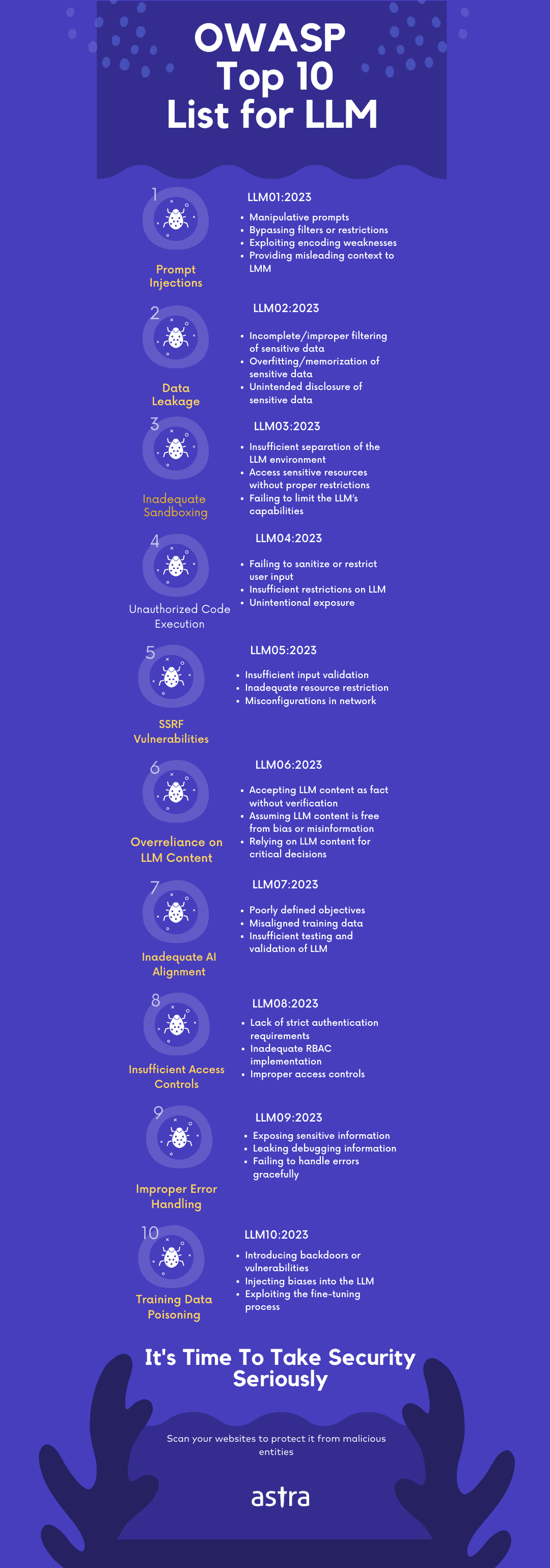

Below is the Owasp Top 10 for LLM version 1.0

LLM01: Prompt Injection

This manipulates a large language model (LLM) through crafty inputs, causing unintended actions by the LLM. Direct injections overwrite system prompts, while indirect ones manipulate inputs from external sources.

LLM02: Insecure Output Handling

This vulnerability occurs when an LLM output is accepted without scrutiny, exposing backend systems. Misuse may lead to severe consequences like XSS, CSRF, SSRF, privilege escalation, or remote code execution.

LLM03: Training Data Poisoning

This occurs when LLM training data is tampered, introducing vulnerabilities or biases that compromise security, effectiveness, or ethical behavior. Sources include Common Crawl, WebText, OpenWebText, & books.

LLM04: Model Denial of Service

Attackers cause resource-heavy operations on LLMs, leading to service degradation or high costs. The vulnerability is magnified due to the resource-intensive nature of LLMs and unpredictability of user inputs.

LLM05: Supply Chain Vulnerabilities

LLM application lifecycle can be compromised by vulnerable components or services, leading to security attacks. Using third-party datasets, pre-trained models, and plugins can add vulnerabilities.

LLM06: Sensitive Information Disclosure

LLM’s may inadvertently reveal confidential data in its responses, leading to unauthorized data access, privacy violations, and security breaches. It’s crucial to implement data sanitization and strict user policies to mitigate this.

LLM07: Insecure Plugin Design

LLM plugins can have insecure inputs and insufficient access control. This lack of application control makes them easier to exploit and can result in consequences like remote code execution.

LLM08: Excessive Agency

LLM-based systems may undertake actions leading to unintended consequences. The issue arises from excessive functionality, permissions, or autonomy granted to the LLM-based systems.

LLM09: Overreliance

Systems or people overly depending on LLMs without oversight may face misinformation, miscommunication, legal issues, and security vulnerabilities due to incorrect or inappropriate content generated by LLMs.

LLM10: Model Theft

This involves unauthorized access, copying, or exfiltration of proprietary LLM models. The impact includes economic losses, compromised competitive advantage, and potential access to sensitive information.

The organization invites experts to join it and provide support to the project.

You can currently download version 1.0 in two formats. The full PDF and the abridged slide format.

Follow me on Twitter: @securityaffairs and Facebook and Mastodon

(SecurityAffairs – hacking, OWASP Top 10 for LLM)

The post OWASP Top 10 for LLM (Large Language Model) applications is out! appeared first on Security Affairs.