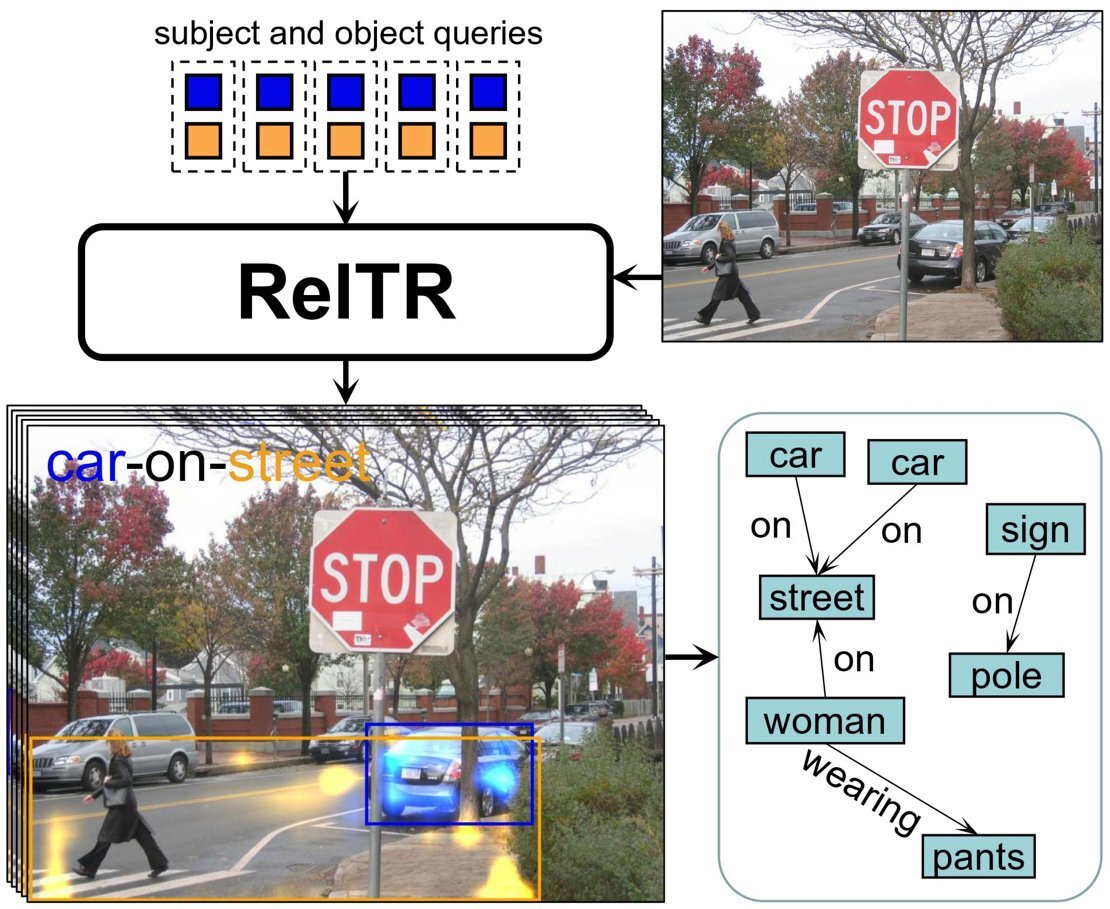

New AI method for graphing scenes from images

Generative AI programs can create images from textual prompts. These models work best when generating images of single objects; creating complete scenes is still difficult. Michael Ying Yang, a UT researcher from the Faculty of ITC, recently developed a novel method that can graph scenes from images. The resulting graphs can serve as a blueprint for generating realistic and coherent images. He and his team recently published their findings in the journal IEEE Transactions on Pattern Analysis and Machine Intelligence.