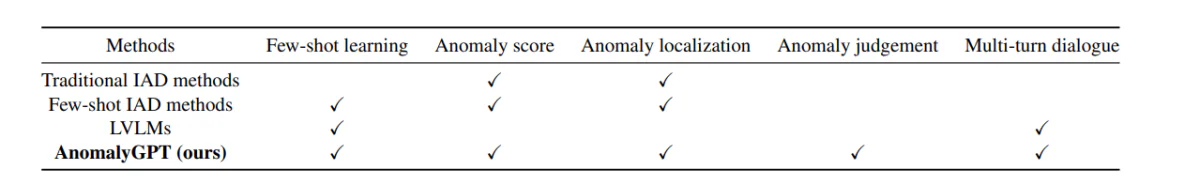

Recently, Large Vision Language Models (LVLMs) such as LLaVA and MiniGPT-4 have demonstrated strong image understanding and achieved high accuracy and efficiency on several visual tasks. While LVLMs excel at recognizing common objects thanks to their extensive training datasets, they lack domain-specific knowledge and have a limited understanding of localized details within images, which limits their effectiveness in Industrial Anomaly Detection (IAD) tasks. Existing IAD frameworks, on the other hand, only provide anomaly scores and require manually set thresholds to distinguish between normal and anomalous samples, which restricts their practical deployment.

The primary purpose of an IAD framework is to detect and localize anomalies in images of industrial scenes and products. However, because real-world anomalous samples are rare and unpredictable, models are typically trained only on normal data and distinguish anomalous samples from normal ones by how far they deviate from typical samples. Currently, IAD frameworks and models primarily output anomaly scores for test samples, and separating normal from anomalous instances for each item class requires a manually specified threshold, which makes them ill-suited to real-world applications.

To explore how Large Vision Language Models can address these challenges, researchers introduced AnomalyGPT, a novel LVLM-based IAD approach. AnomalyGPT can detect and localize anomalies without manual threshold settings. It can also provide relevant information about the image and engage interactively with users, allowing them to ask follow-up questions about the anomaly or their specific needs.

Industrial Anomaly Detection and Large Vision Language Models

Existing IAD frameworks fall into two categories.

- Reconstruction-based IAD.

- Feature Embedding-based IAD.

In a Reconstruction-based IAD framework, the aim is to reconstruct anomalous samples into their normal counterparts and detect anomalies by computing the reconstruction error. SCADN, RIAD, AnoDDPM, and InTra use different reconstruction frameworks, ranging from Generative Adversarial Networks (GANs) and autoencoders to diffusion models and transformers.
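To make the reconstruction-error idea concrete, here is a minimal sketch in Python with NumPy. It swaps in PCA as a linear "autoencoder" purely for illustration (it is not SCADN, RIAD, AnoDDPM, or InTra): the model is fitted on normal images only, each test pixel's squared reconstruction error is its anomaly score, and the image score is the maximum over pixels. The toy data and function names are hypothetical.

```python
import numpy as np

def fit_pca_reconstructor(normal, n_components):
    """Fit a linear 'autoencoder' (PCA) on flattened normal images only."""
    x = normal.reshape(len(normal), -1).astype(np.float64)
    mean = x.mean(axis=0)
    # Rows of vt are the principal directions of the normal data.
    _, _, vt = np.linalg.svd(x - mean, full_matrices=False)
    return mean, vt[:n_components]

def reconstruct(images, mean, basis):
    x = images.reshape(len(images), -1).astype(np.float64)
    codes = (x - mean) @ basis.T   # encode into the normal subspace
    recon = codes @ basis + mean   # decode back to pixel space
    return recon.reshape(images.shape)

def anomaly_maps(images, mean, basis):
    """Per-pixel squared reconstruction error and per-image max score."""
    err = (images - reconstruct(images, mean, basis)) ** 2
    return err, err.reshape(len(images), -1).max(axis=1)

# Toy data: normal 8x8 images are scaled copies of one vertical gradient.
rng = np.random.default_rng(0)
pattern = np.outer(np.linspace(0, 1, 8), np.ones(8))
normal = rng.uniform(0.5, 1.5, (50, 1, 1)) * pattern + 0.01 * rng.standard_normal((50, 8, 8))
mean, basis = fit_pca_reconstructor(normal, n_components=2)

good = 0.9 * pattern
bad = good.copy()
bad[3, 3] += 1.0  # a bright "defect" the normal subspace cannot reproduce
maps, scores = anomaly_maps(np.stack([good, bad]), mean, basis)
print(scores[1] > scores[0])  # True
```

A learned nonlinear reconstructor would replace `fit_pca_reconstructor` and `reconstruct`, but the scoring step stays the same: pixels the model cannot reconstruct from "normal" knowledge light up in the error map.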

In a Feature Embedding-based IAD framework, on the other hand, the focus is on modeling the feature embeddings of normal data. Methods like PatchSVDD try to find a hypersphere that tightly encloses normal samples, whereas frameworks like PyramidFlow and Cflow project normal samples onto a Gaussian distribution using normalizing flows. The CFA and PatchCore frameworks build a memory bank of patch embeddings from normal samples and detect anomalies using the distance between a test sample's embedding and its nearest normal embedding in the bank.
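The memory-bank approach can be sketched in a few lines of NumPy. This is a simplified PatchCore-style illustration under stated assumptions: the "patch embeddings" are random toy arrays rather than features from a real encoder, and PatchCore's coreset subsampling and reweighting are omitted. Each query patch is scored by its Euclidean distance to the nearest normal patch, and the image score is the maximum patch score. Function names are hypothetical.

```python
import numpy as np

def build_memory_bank(normal_feature_maps):
    """Stack the patch embeddings (H, W, D) of all normal images into (M, D)."""
    return np.concatenate([f.reshape(-1, f.shape[-1]) for f in normal_feature_maps])

def anomaly_map(query_feats, bank):
    """Distance from every query patch (H, W, D) to its nearest bank entry."""
    h, w, d = query_feats.shape
    q = query_feats.reshape(-1, d)
    # Squared Euclidean distances ||q||^2 - 2 q.b + ||b||^2, shape (H*W, M).
    d2 = (q ** 2).sum(1)[:, None] - 2.0 * q @ bank.T + (bank ** 2).sum(1)[None, :]
    return np.sqrt(np.maximum(d2, 0.0)).min(axis=1).reshape(h, w)

rng = np.random.default_rng(0)
normal = [rng.normal(size=(4, 4, 16)) for _ in range(3)]  # toy patch embeddings
bank = build_memory_bank(normal)                           # shape (48, 16)

query = normal[0].copy()
query[1, 2] += 10.0                  # corrupt one patch of a known-normal image
amap = anomaly_map(query, bank)      # the corrupted patch (1, 2) scores highest
image_score = amap.max()             # image-level anomaly score
```

Note that this still yields a score, not a decision: turning `image_score` into "normal" or "anomalous" needs a threshold, which is exactly the manual step the article says AnomalyGPT avoids.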

Both approaches follow the “one class, one model” learning paradigm, which requires a large number of normal samples to learn the distribution of each object class. This requirement makes them impractical for novel object categories and limits their use in dynamic product environments. AnomalyGPT, by contrast, uses an in-context learning paradigm for object categories, enabling inference with only a handful of normal samples.

Moving ahead, we have Large Vision Language Models, or LVLMs. Large Language Models (LLMs) have enjoyed tremendous success in NLP, and they are now being explored for visual tasks. The BLIP-2 framework uses a Q-Former to feed visual features from a Vision Transformer into the Flan-T5 model. MiniGPT-4 connects the visual component of BLIP-2 to the Vicuna model with a linear layer and performs a two-stage finetuning process on image-text data. These approaches indicate that LLMs can be applied to visual tasks. However, these models are trained on general data and lack the domain-specific expertise needed for widespread applications.

How Does AnomalyGPT Work?

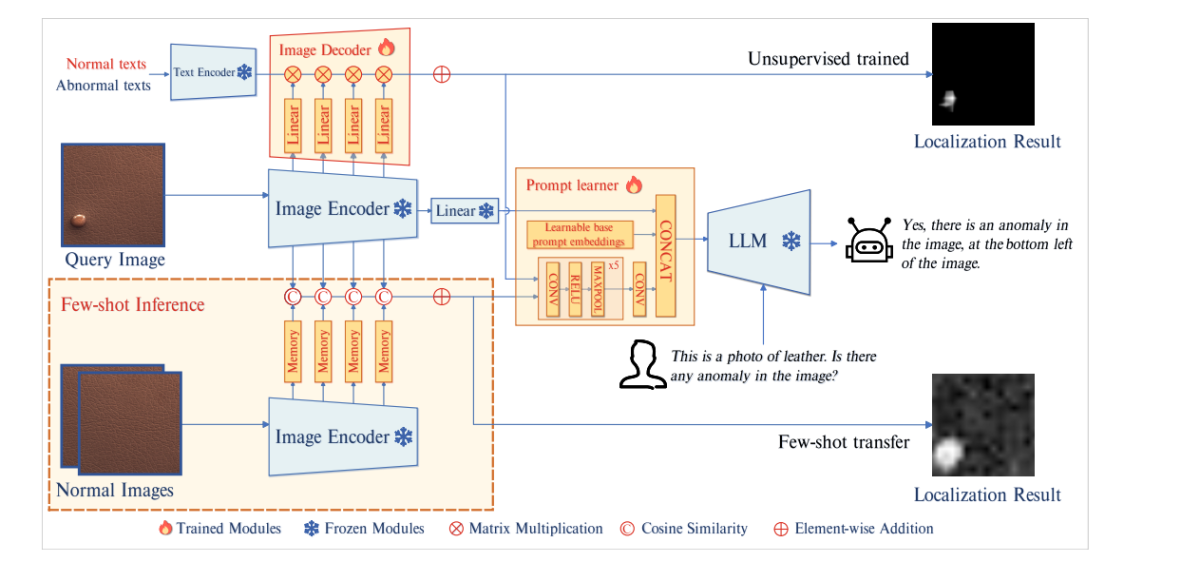

At its core, AnomalyGPT is a novel conversational IAD large vision language model designed to detect industrial anomalies and pinpoint their exact location in images. The framework uses an LLM and a pre-trained image encoder, and aligns images with their corresponding textual descriptions using simulated anomaly data. The model introduces a decoder module and a prompt learner module to improve IAD performance and produce pixel-level localization output.

Model Architecture

The above image depicts the architecture of AnomalyGPT. The model first passes the query image to a frozen image encoder. It then extracts patch-level features from the intermediate layers and feeds them to an image decoder, which computes their similarity with normal and abnormal texts to obtain localization results. The prompt learner then converts these localization results into prompt embeddings that can be fed into the LLM alongside the user's text input. Finally, the LLM uses the prompt embeddings, image inputs, and user-provided text to detect anomalies, pinpoint their locations, and generate responses for the user.

Decoder

To achieve pixel-level anomaly localization, AnomalyGPT deploys a lightweight, feature-matching-based image decoder that supports both few-shot and unsupervised IAD settings. The decoder's design is inspired by the WinCLIP, PatchCore, and APRIL-GAN frameworks. The model partitions the image encoder into 4 stages and extracts intermediate patch-level features from each stage.

However, these intermediate features have not gone through the final image-text alignment, so they cannot be compared directly with text features. To tackle this, AnomalyGPT introduces additional layers that project the intermediate features and align them with text features representing normal and abnormal semantics.
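A rough NumPy sketch of this decoder step follows, under several assumptions that are not taken from the article: one linear projection per stage (random or identity here, learned in practice), a CLIP-style logit scale of 100, averaging as the way stage maps are combined, and nearest-neighbour upsampling. Per stage, each projected patch is compared by cosine similarity with a "normal" and an "abnormal" text embedding, and a softmax over the two gives a per-patch abnormality probability. All names and toy inputs are hypothetical.

```python
import numpy as np

def l2_normalize(x, axis=-1):
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def decode_anomaly_map(stage_feats, projections, text_feats, out_size, logit_scale=100.0):
    """stage_feats: list of (H, W, D_i) patch features, one per encoder stage
    (same H, W for every stage, as in a ViT); projections: list of (D_i, C)
    matrices; text_feats: (2, C) rows = [normal, abnormal] text embeddings."""
    text = l2_normalize(text_feats)
    maps = []
    for feats, proj in zip(stage_feats, projections):
        patches = l2_normalize(feats @ proj)           # project into the text space
        logits = logit_scale * (patches @ text.T)      # scaled cosine sims, (H, W, 2)
        maps.append(softmax(logits, axis=-1)[..., 1])  # P(abnormal) per patch
    anomaly = np.mean(maps, axis=0)                    # combine the stages (assumed: mean)
    k = out_size // anomaly.shape[0]                   # nearest-neighbour upsampling
    return np.kron(anomaly, np.ones((k, k)))

C = 8
text = np.eye(C)[:2]                   # toy "normal" / "abnormal" text embeddings
feats = np.tile(text[0], (4, 4, 1))    # every patch resembles "normal"...
feats[1, 1] = text[1]                  # ...except one, which resembles "abnormal"
stages = [feats.copy() for _ in range(4)]
projs = [np.eye(C) for _ in range(4)]
amap = decode_anomaly_map(stages, projs, text, out_size=16)
```

The point of the projection layers is visible in the code: without `feats @ proj`, intermediate features would live in a different space from `text_feats`, and the cosine similarity would be meaningless.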

Prompt Learner

AnomalyGPT introduces a prompt learner that transforms the localization result into prompt embeddings, both to leverage fine-grained semantics from the image and to maintain semantic consistency between the decoder and LLM outputs. The prompt learner also incorporates learnable prompt embeddings, unrelated to the decoder outputs, to provide additional information for the IAD task. Finally, the model feeds these embeddings and the original image information to the LLM.

The prompt learner consists of learnable base prompt embeddings and a convolutional neural network. The network converts the localization result into prompt embeddings, which, together with the base prompts, form a set of prompt embeddings that is fed into the LLM along with the image embeddings.
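The data flow of the prompt learner can be illustrated with a deliberately small NumPy sketch. It is a simplification under stated assumptions: the CNN is reduced to a single convolution whose kernel size equals its stride (so each output position becomes one prompt token), and the embedding width, number of base prompts, image-embedding length, and token ordering are all hypothetical; the article does not specify them.

```python
import numpy as np

def strided_conv(amap, kernel):
    """A single convolution with kernel size == stride == k, 1 input channel and
    E output channels; each output position becomes one prompt token.
    amap: (H, W) localization map; kernel: (k, k, E). Returns (H//k * W//k, E)."""
    k, _, e = kernel.shape
    h, w = amap.shape[0] // k, amap.shape[1] // k
    # Split the map into non-overlapping k x k blocks, row-major.
    blocks = amap[: h * k, : w * k].reshape(h, k, w, k).transpose(0, 2, 1, 3)
    return blocks.reshape(h * w, k * k) @ kernel.reshape(k * k, e)

def build_llm_prefix(amap, kernel, base_prompts, image_embeds):
    """Assumed order: [image embeddings | map-derived prompts | base prompts];
    the user's text-token embeddings would follow this prefix."""
    map_prompts = strided_conv(amap, kernel)
    prompts = np.concatenate([map_prompts, base_prompts], axis=0)
    return np.concatenate([image_embeds, prompts], axis=0)

rng = np.random.default_rng(0)
E = 32                                   # hypothetical LLM embedding width
amap = rng.random((16, 16))              # e.g. the decoder's anomaly map
kernel = rng.normal(0, 0.02, (4, 4, E))  # would be learned
base = rng.normal(0, 0.02, (9, E))       # learnable base prompts (count assumed)
image = rng.normal(0, 0.02, (32, E))     # hypothetical image embeddings
prefix = build_llm_prefix(amap, kernel, base, image)
print(prefix.shape)  # (57, 32)
```

Because the map-derived prompts are computed from the anomaly map, the LLM receives the decoder's fine-grained localization in the same embedding space as its other inputs, which is how the decoder and LLM outputs are kept consistent.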

Anomaly Simulation

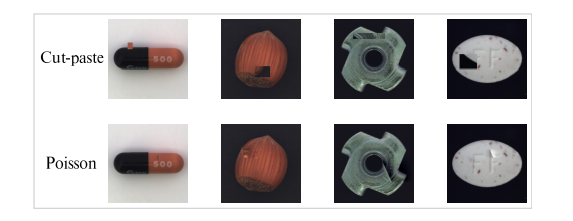

AnomalyGPT adopts the NSA method to simulate anomalous data. NSA builds on the Cut-paste technique and uses Poisson image editing to alleviate the discontinuities introduced by pasting image segments. Cut-paste is a commonly used technique in IAD frameworks for generating simulated anomaly images.

The Cut-paste method randomly crops a block region from one image and pastes it at a random location in another image, creating a simulated anomalous region. These simulated anomaly samples can improve the performance of IAD models, but they often produce noticeable discontinuities. Poisson image editing addresses this by seamlessly cloning an object from one image into another through solving Poisson's partial differential equation.

The above image compares Poisson and Cut-paste image editing. As can be seen, the Cut-paste results show visible discontinuities, while the Poisson editing results look more natural.
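Both techniques can be sketched for grayscale images in NumPy. This is an illustration, not NSA's implementation: NSA's sampling rules and blending details are not described here, the block sizes are hypothetical, and the Poisson step uses plain Jacobi iterations (production code would typically use a sparse solver or OpenCV's `cv2.seamlessClone`). Poisson cloning keeps the *gradients* of the source block (its discrete Laplacian) while forcing the result to match the target image on the block's boundary, which removes the seam that naive Cut-paste leaves.

```python
import numpy as np

def neighbour_sum(a):
    """Sum of the 4-connected neighbours of every pixel (wraps at the border)."""
    return np.roll(a, 1, 0) + np.roll(a, -1, 0) + np.roll(a, 1, 1) + np.roll(a, -1, 1)

def cut_paste(src, dst, rng, min_frac=0.1, max_frac=0.3):
    """Naive Cut-paste on equal-shape grayscale images.
    Returns (pasted image, mask of pasted block, Poisson guidance field)."""
    h, w = dst.shape
    bh = int(rng.integers(int(min_frac * h), int(max_frac * h) + 1))
    bw = int(rng.integers(int(min_frac * w), int(max_frac * w) + 1))
    # 1-pixel margins keep every block pixel's neighbours inside the image.
    sy, sx = int(rng.integers(1, h - bh)), int(rng.integers(1, w - bw))
    ty, tx = int(rng.integers(1, h - bh)), int(rng.integers(1, w - bw))
    src = src.astype(np.float64)
    pasted = dst.astype(np.float64).copy()
    pasted[ty:ty + bh, tx:tx + bw] = src[sy:sy + bh, sx:sx + bw]
    mask = np.zeros((h, w), dtype=bool)
    mask[ty:ty + bh, tx:tx + bw] = True
    # Guidance = negative discrete Laplacian of the source block (its gradients).
    guide = np.zeros((h, w))
    lap = 4.0 * src - neighbour_sum(src)
    guide[ty:ty + bh, tx:tx + bw] = lap[sy:sy + bh, sx:sx + bw]
    return pasted, mask, guide

def poisson_blend(pasted, dst, mask, guide, iters=3000):
    """Solve 4 f_p - sum_q f_q = guide_p inside mask, f = dst outside (Jacobi)."""
    f = dst.astype(np.float64).copy()
    f[mask] = pasted[mask]              # start from the naive Cut-paste result
    for _ in range(iters):
        f_new = (neighbour_sum(f) + guide) / 4.0
        f[mask] = f_new[mask]           # pixels outside the mask stay fixed to dst
    return f

rng = np.random.default_rng(0)
dst = np.tile(np.arange(64, dtype=np.float64), (64, 1))  # horizontal ramp
src = dst + 100.0                                         # same texture, much brighter
pasted, mask, guide = cut_paste(src, dst, rng)
blended = poisson_blend(pasted, dst, mask, guide)
print(np.allclose(blended, dst, atol=1e-3))  # True
```

The toy case is chosen so the answer is known: the source has the same gradients as the target, only offset in brightness, so Cut-paste produces a sharp step while Poisson cloning reconstructs the target seamlessly. For real defect textures the blended block keeps the source's detail but its overall intensity adapts to the surroundings.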

Question and Answer Content

To perform prompt tuning on the LVLM, AnomalyGPT generates a corresponding textual query for each anomaly image. Each query consists of two components. The first part is a description of the input image that provides information about the objects present and their expected attributes. The second part asks whether there is an anomaly in the object shown in the image.

The LVLM first answers whether there is an anomaly in the image. If it detects anomalies, it goes on to specify the location and number of the anomalous areas. To let the LVLM describe the position of anomalies verbally, the image is divided into a 3×3 grid of distinct regions, as shown in the figure below.

The LVLM is also fed descriptive, foundational knowledge about the input image, which helps the model better understand the image's components.
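The 3×3 grid scheme can be illustrated by turning a binary anomaly mask into a short textual answer. This is a sketch with hypothetical names: the region wording and the answer template are illustrative, not AnomalyGPT's actual training text. Anomalous areas are counted as 4-connected components with a simple breadth-first flood fill, and each grid cell containing any anomalous pixel is reported.

```python
import numpy as np
from collections import deque

# Hypothetical region wording for the 3x3 grid.
REGION_NAMES = [["top left", "top", "top right"],
                ["left", "center", "right"],
                ["bottom left", "bottom", "bottom right"]]

def grid_regions(mask):
    """Names of the 3x3 grid cells that contain any anomalous pixel."""
    h, w = mask.shape
    names = []
    for r in range(3):
        for c in range(3):
            cell = mask[r * h // 3:(r + 1) * h // 3, c * w // 3:(c + 1) * w // 3]
            if cell.any():
                names.append(REGION_NAMES[r][c])
    return names

def count_components(mask):
    """Number of 4-connected anomalous areas (breadth-first flood fill)."""
    seen = np.zeros(mask.shape, dtype=bool)
    count = 0
    for y, x in zip(*np.nonzero(mask)):
        if seen[y, x]:
            continue
        count += 1
        seen[y, x] = True
        queue = deque([(y, x)])
        while queue:
            cy, cx = queue.popleft()
            for ny, nx in ((cy + 1, cx), (cy - 1, cx), (cy, cx + 1), (cy, cx - 1)):
                if (0 <= ny < mask.shape[0] and 0 <= nx < mask.shape[1]
                        and mask[ny, nx] and not seen[ny, nx]):
                    seen[ny, nx] = True
                    queue.append((ny, nx))
    return count

def describe(mask):
    """Turn a binary anomaly mask into a short (hypothetical) answer."""
    if not mask.any():
        return "No, there are no anomalies in the image."
    n = count_components(mask)
    noun = "area" if n == 1 else "areas"
    return f"Yes. {n} anomalous {noun} found in: {', '.join(grid_regions(mask))}."

mask = np.zeros((90, 90), dtype=bool)
mask[5:10, 5:10] = True    # a defect in the top-left cell
mask[80:85, 80:85] = True  # another in the bottom-right cell
print(describe(mask))  # Yes. 2 anomalous areas found in: top left, bottom right.
```

A coarse grid keeps the vocabulary small and consistent, so the LLM can learn to express location in words while pixel-level precision is left to the decoder's anomaly map.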

Datasets and Evaluation Metrics

The experiments are conducted primarily on the VisA and MVTec-AD datasets. MVTec-AD, one of the most popular datasets for IAD, consists of 3629 training images and 1725 test images split across 15 categories. The training set contains only normal images, whereas the test set contains both normal and anomalous images. The VisA dataset, on the other hand, consists of 9621 normal images and nearly 1200 anomalous images split across 12 categories.

Like existing IAD frameworks, AnomalyGPT uses AUC, the Area Under the Receiver Operating Characteristic curve, as its evaluation metric, with image-level AUC used to assess anomaly detection and pixel-level AUC to assess anomaly localization. In addition, it reports image-level accuracy, since AnomalyGPT can uniquely determine whether an anomaly is present without a manually set threshold.
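The three metrics can be sketched in NumPy as follows (function names are hypothetical). AUC is computed through its Mann-Whitney interpretation: the fraction of (anomalous, normal) pairs whose scores are ordered correctly, with ties counted as one half. Image-level AUC uses one score per image; pixel-level AUC treats every pixel of the anomaly maps as a sample. Accuracy, by contrast, compares yes/no answers directly, so no threshold is involved.

```python
import numpy as np

def auroc(scores, labels):
    """AUC via the Mann-Whitney statistic: fraction of (anomalous, normal)
    pairs whose scores are ordered correctly, ties counted as 1/2."""
    scores = np.asarray(scores, dtype=np.float64).ravel()
    labels = np.asarray(labels, dtype=bool).ravel()
    pos, neg = scores[labels], scores[~labels]
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return (greater + 0.5 * ties) / (pos.size * neg.size)

def image_auroc(image_scores, image_labels):
    return auroc(image_scores, image_labels)   # one score per image

def pixel_auroc(anomaly_maps, gt_masks):
    return auroc(anomaly_maps, gt_masks)       # every pixel is a sample

def image_accuracy(predicted_anomalous, image_labels):
    """Accuracy of yes/no answers; no score threshold has to be chosen."""
    pred = np.asarray(predicted_anomalous, dtype=bool)
    return float(np.mean(pred == np.asarray(image_labels, dtype=bool)))

print(image_auroc([0.1, 0.4, 0.35, 0.8], [0, 0, 1, 1]))          # 0.75
print(image_accuracy([False, True, True, True], [0, 0, 1, 1]))   # 0.75
```

The pairwise comparison uses memory proportional to (anomalous samples × normal samples), which is fine for image-level scores but too heavy for full-resolution pixel-level evaluation; a rank-based implementation such as scikit-learn's `roc_auc_score` is the usual choice there.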

Results

Quantitative Results

Few-Shot Industrial Anomaly Detection

AnomalyGPT is compared against prior few-shot IAD frameworks, including PaDiM, SPADE, WinCLIP, and PatchCore, as baselines.

The above figure compares AnomalyGPT with these few-shot IAD frameworks. On both datasets, AnomalyGPT outperforms previous methods in image-level AUC and also achieves good accuracy.

Unsupervised Industrial Anomaly Detection

In the unsupervised setting, where a large number of normal samples is available, AnomalyGPT trains a single model on samples from all classes within a dataset. The developers chose UniAD as the baseline because it is trained under the same setup. The model is also compared against the JNLD and PaDiM frameworks in the same unified setting.

The above figure compares the performance of AnomalyGPT with that of other frameworks.

Qualitative Results

The above image illustrates AnomalyGPT's performance in the unsupervised anomaly detection setting, whereas the figure below demonstrates its performance with 1-shot in-context learning.

AnomalyGPT can indicate the presence of anomalies, mark their locations, and provide pixel-level localization results. In the 1-shot in-context learning setting, its localization performance is slightly lower than in the unsupervised setting because of the absence of training.

Conclusion

AnomalyGPT is a novel conversational IAD vision language model designed to leverage the powerful capabilities of large vision language models. It can not only identify anomalies in an image but also pinpoint their exact locations. AnomalyGPT also supports multi-turn dialogues focused on anomaly detection and shows outstanding performance in few-shot in-context learning. By exploring the potential applications of LVLMs in anomaly detection, it introduces new ideas and possibilities for the IAD industry.

The post AnomalyGPT: Detecting Industrial Anomalies using LVLMs appeared first on Unite.AI.