People solve new problems readily without any special training or practice by comparing them to familiar problems and extending the solution to the new problem. That process, known as analogical reasoning, has long been thought to be a uniquely human…

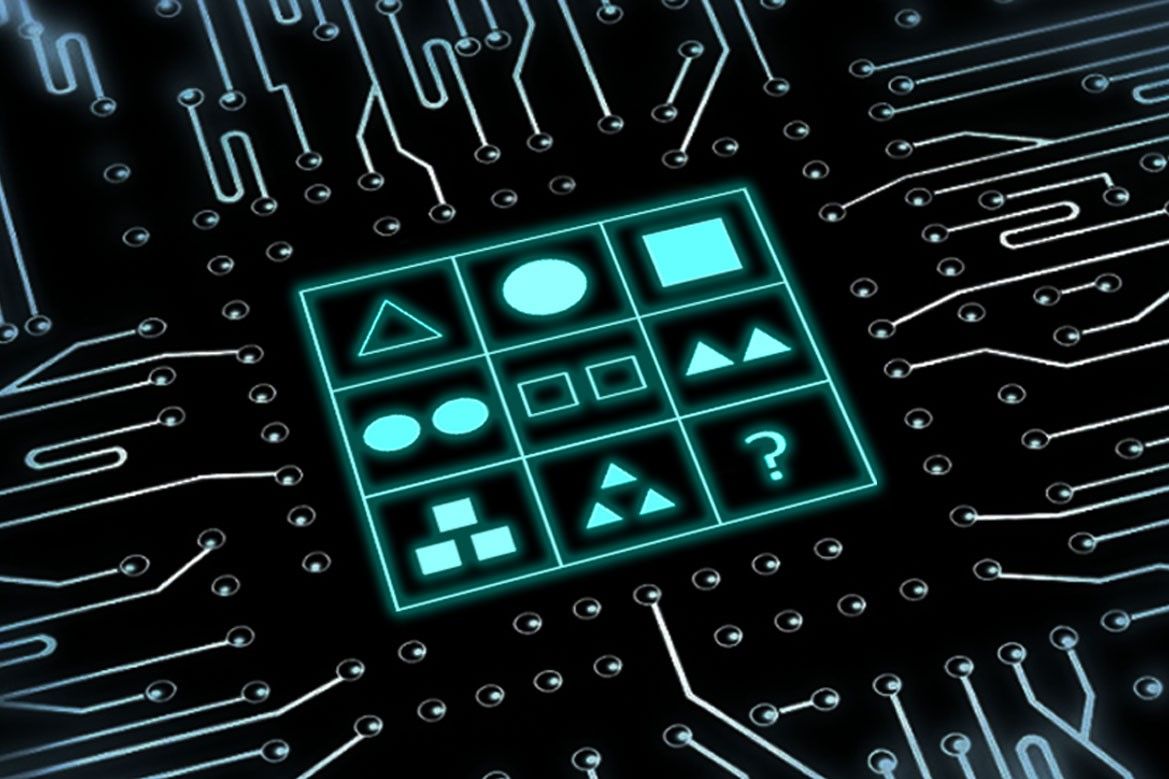

Research published recently in Nature Machine Intelligence addressed the limited availability of human-annotated, or labeled, medical images by using an adversarial, or competitive, learning approach against unlabeled data.…

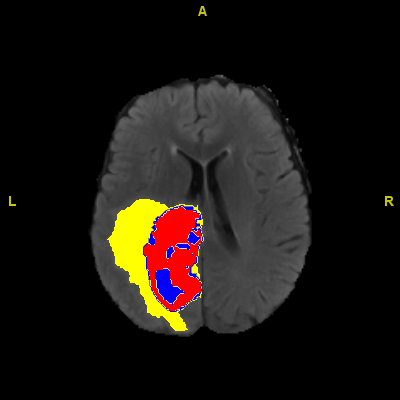

OpenAI's widely celebrated large language model has been hailed as "quite simply the best artificial intelligence chatbot ever released to the general public" by Kevin Roose, author of "Futureproof: 9 Rules for Humans in the Age of Automation" and as…

A team of computer scientists and designers based out of the University of Waterloo have developed a tool to help people use color better in graphic design. The research paper, "Facilitating Graphics Design with Interactive 2D Color Palette Recommendation," was…

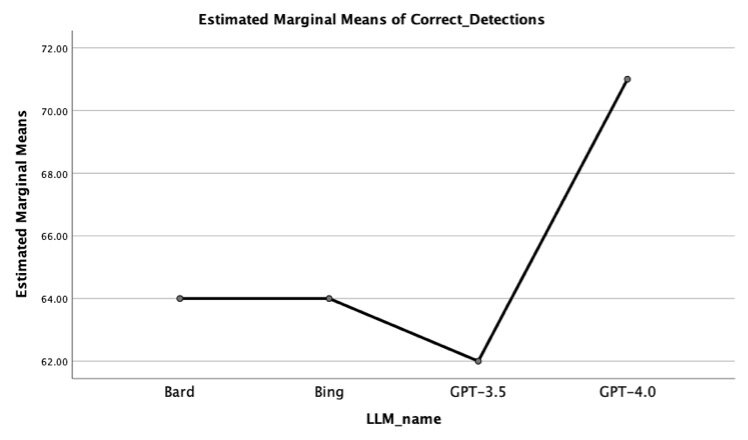

A researcher at Ben-Gurion University of the Negev has designed an AI system that identifies social norm violations. The project is one of the first to tackle the automatic identification of social norm violations. While many social norms exist worldwide,…

Memories can be as tricky to hold onto for machines as they can be for humans. To help understand why artificial agents develop holes in their own cognitive processes, electrical engineers at The Ohio State University have analyzed how much…

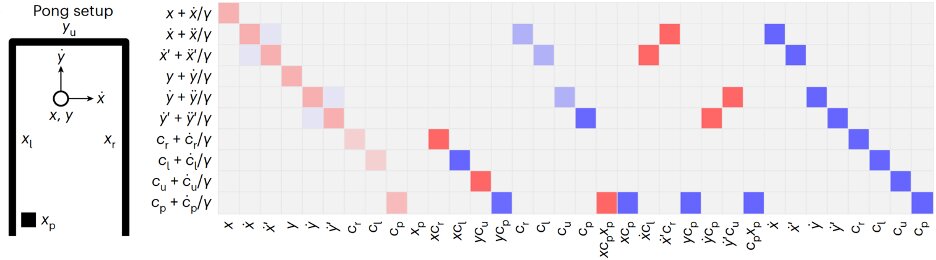

Despite their huge success, the inner workings of large language models such as OpenAI's GPT model family and Google Bard remain a mystery, even to their developers. Researchers at ETH and Google have uncovered a potential key mechanism behind their…

A new "stress test" method created by a Georgia Tech researcher allows programmers to more easily determine if trained visual recognition models are sensitive to input changes or rely too heavily on context clues to perform their tasks.…

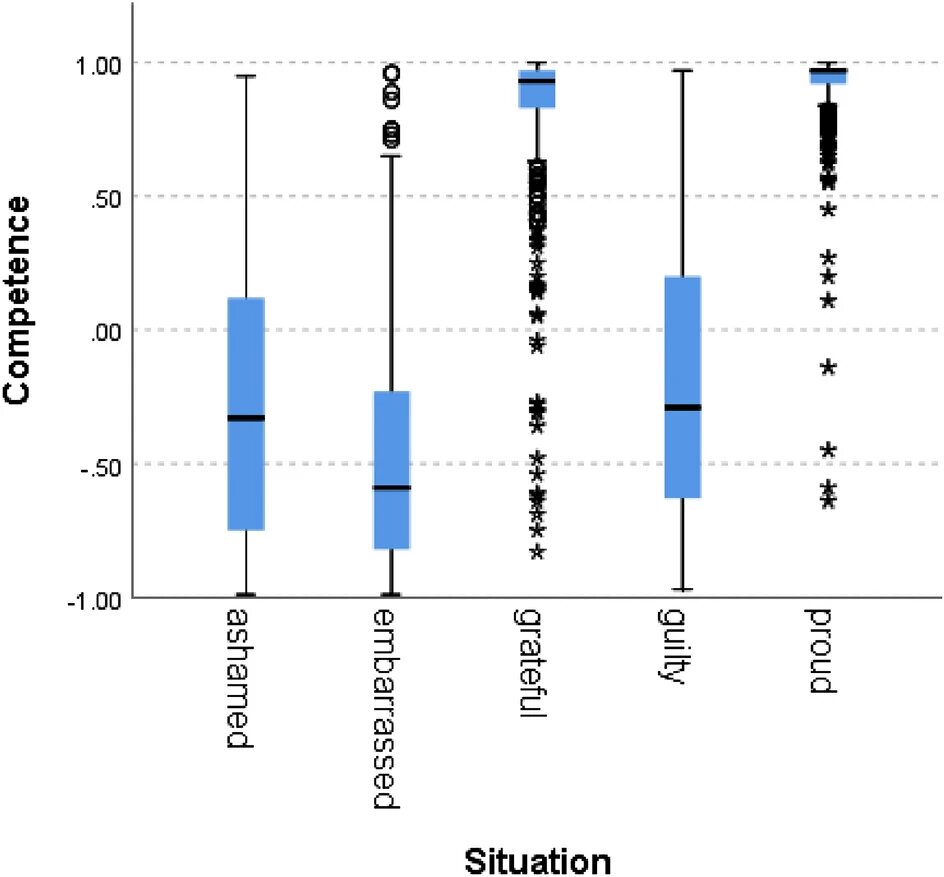

How and why we make thousands of decisions every day has long proven to be a popular area of research and commentary.…

Large language models (LLMs) are an evolution of natural language processing (NLP) techniques that can rapidly generate texts closely resembling those written by humans and complete other simple language-related tasks. These models have become increasingly popular after the public release…

How does the human brain navigate complex circumstances—say, driving through Harvard Square traffic at 5 p.m.?…

Until recently, 3D surface reconstruction has been a relatively slow, painstaking process involving significant trial and error and manual input. But what if you could take a video of an object or scene with your smartphone and turn it into…

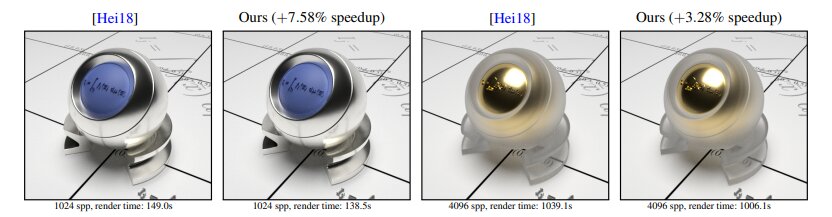

Setting its sights on evolving graphics processing units in a growing universe of generative AI, Intel announced the release of several papers outlining efforts it is pursuing in what observers say is a multibillion-dollar opportunity in coming years for the…

Reservoir computing is a promising computational framework based on recurrent neural networks (RNNs), which essentially maps input data onto a high-dimensional computational space, keeping some parameters of artificial neural networks (ANNs) fixed while updating others. This framework could help to…

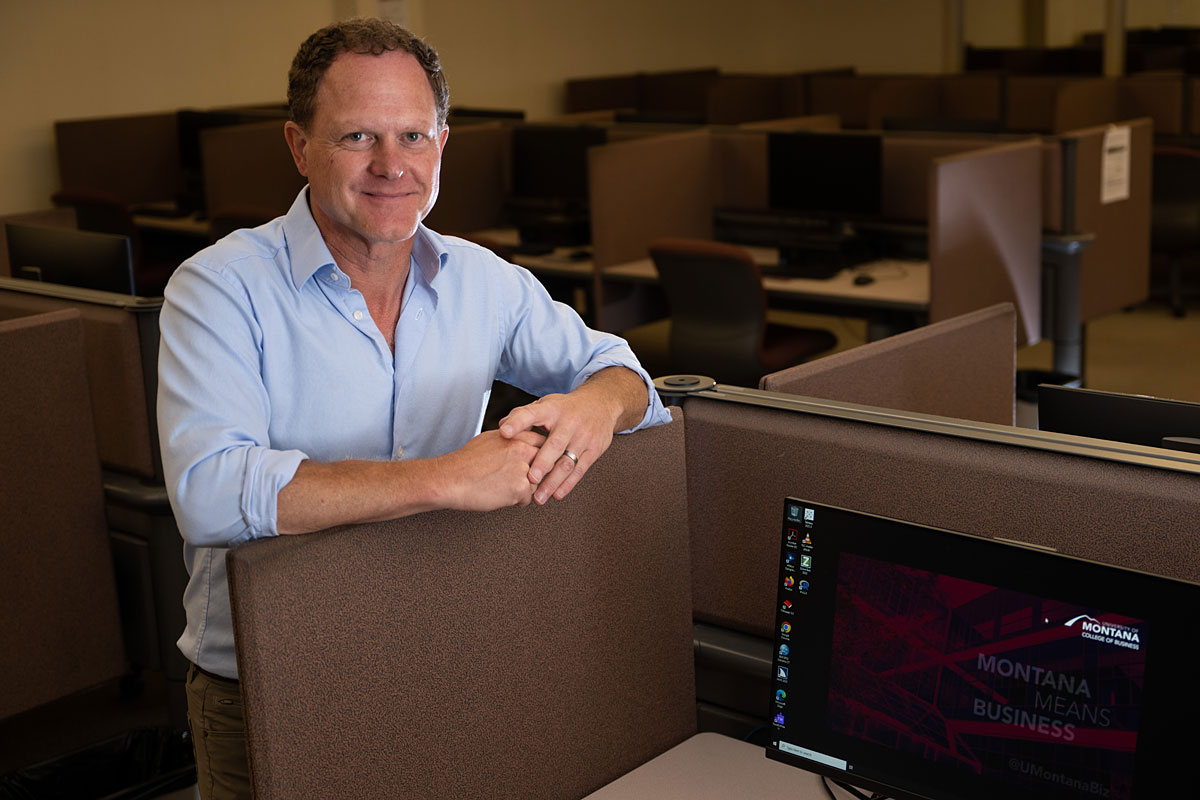

New research from the University of Montana and its partners suggests artificial intelligence can match the top 1% of human thinkers on a standard test for creativity.…

Chart captions that explain complex trends and patterns are important for improving a reader's ability to comprehend and retain the data being presented. For people with visual disabilities, the information in a caption often provides their only means of understanding…

Language models like ChatGPT are making headlines for their impressive ability to "think" and communicate like humans do. Their achievements so far include answering questions, summarizing text, and even engaging in emotionally intelligent conversation.…

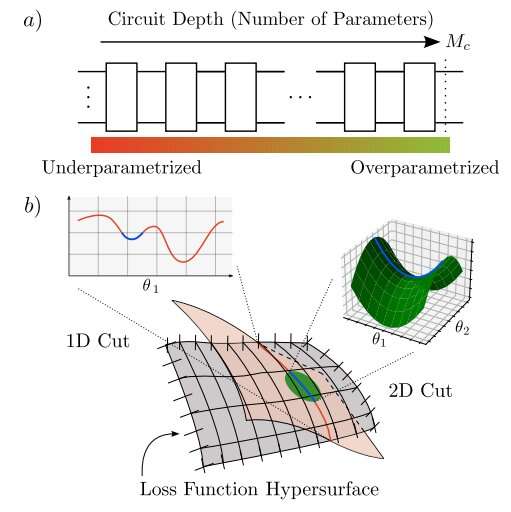

A theoretical proof shows that a technique called overparametrization enhances performance in quantum machine learning for applications that stymie classical computers.…

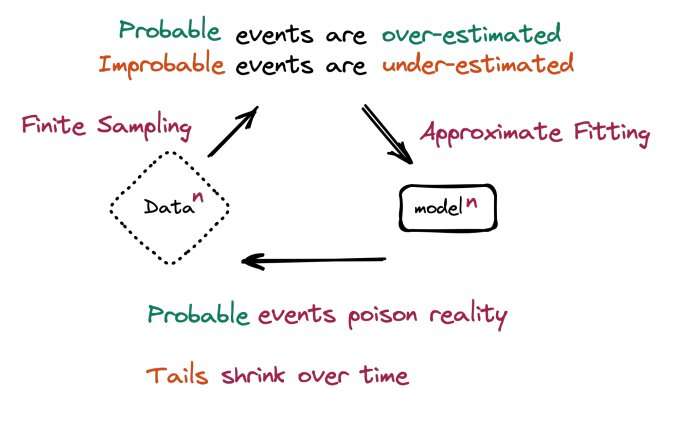

Large language models are generating verbal pollution that threatens to undermine the very data such models are trained on.…

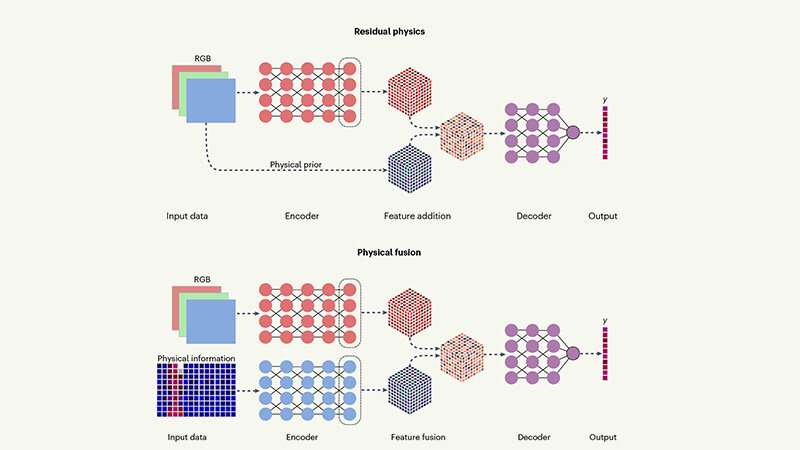

Researchers from UCLA and the United States Army Research Laboratory have laid out a new approach to enhance artificial intelligence-powered computer vision technologies by adding physics-based awareness to data-driven techniques.…
